Instructions to use MirageML/lowpoly-landscape with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries

- Diffusers

How to use MirageML/lowpoly-landscape with Diffusers:

pip install -U diffusers transformers accelerate

import torch
from diffusers import DiffusionPipeline

# switch to "mps" for apple devices
pipe = DiffusionPipeline.from_pretrained("MirageML/lowpoly-landscape", dtype=torch.bfloat16, device_map="cuda")

prompt = "Astronaut in a jungle, cold color palette, muted colors, detailed, 8k"
image = pipe(prompt).images[0]

- Notebooks

- Google Colab

- Kaggle

- Local Apps

- Draw Things

- DiffusionBee

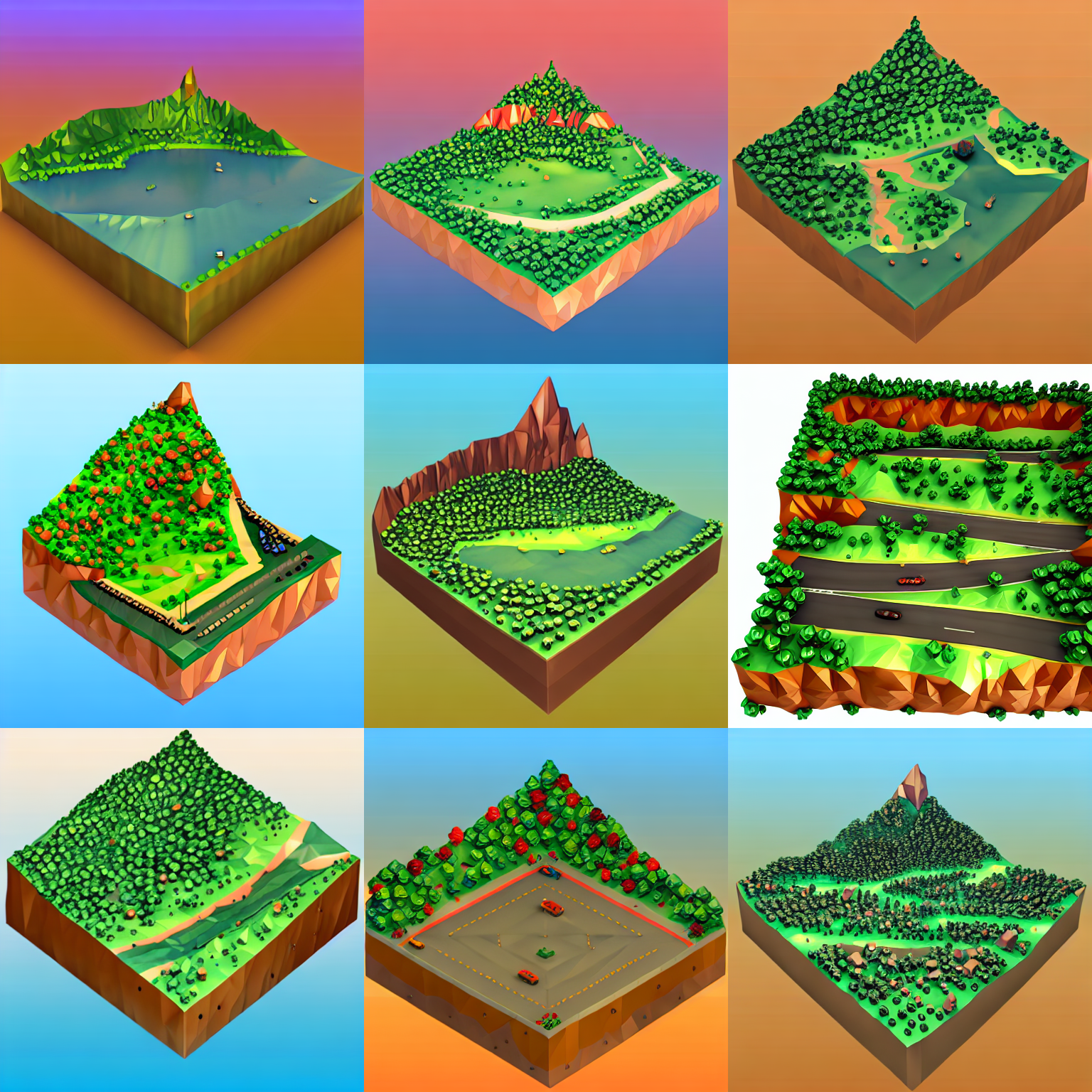

Low Poly Landscape on Stable Diffusion via Dreambooth

This is the Stable Diffusion model fine-tuned on the Low Poly Landscape concept, taught to Stable Diffusion with Dreambooth.

It can be used by modifying the instance_prompt: a photo of lowpoly_landscape
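As a rough illustration of working with the instance prompt, the sketch below appends an optional scene description to it and passes the result to the pipeline. The helper names `build_prompt` and `generate`, the comma-joined prompt format, the `float16` dtype, and the output filename are assumptions made for this example, not part of the model card. It uses the long-standing `torch_dtype` keyword and `.to(device)`; adjust both to match your installed diffusers version and hardware.

```python
INSTANCE_PROMPT = "a photo of lowpoly_landscape"  # instance prompt from the model card


def build_prompt(subject: str = "", instance_prompt: str = INSTANCE_PROMPT) -> str:
    """Return the instance prompt, optionally followed by a scene description.

    Joining with a comma is an assumed convention for this sketch.
    """
    subject = subject.strip()
    return f"{instance_prompt}, {subject}" if subject else instance_prompt


def generate(prompt: str, device: str = "cuda"):
    """Load the model and return one generated PIL image (hypothetical helper)."""
    # Imported lazily so build_prompt works without torch or diffusers installed.
    import torch
    from diffusers import DiffusionPipeline

    pipe = DiffusionPipeline.from_pretrained(
        "MirageML/lowpoly-landscape",
        torch_dtype=torch.float16,  # assumed dtype; half precision may not work on CPU
    )
    pipe = pipe.to(device)  # use "mps" on Apple devices
    return pipe(prompt).images[0]


# Example usage (downloads the model weights on first run):
# image = generate(build_prompt("a mountain lake at sunset"))
# image.save("lowpoly_landscape.png")
```

Keeping prompt construction separate from model loading makes it easy to try several scene descriptions without touching the pipeline code.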

Run on Mirage

Run this model and explore text-to-3D on Mirage!

Here is a sample output for this model:

Share your Results and Reach us on Discord!
